If the previous article gave you the “why” of artificial intelligence, this article is going to give you one of the most important pieces of the “how.” You do not need to become a machine learning engineer to use AI effectively. But there is one concept that will come up again and again as you build AI-powered systems, compare different models, and manage your costs. That concept is tokens.

Tokens are the currency of the AI world, both literally and figuratively. They determine how much text an AI model can process, how much it costs to use that model, and how you should think about designing your prompts and workflows for maximum efficiency. Once you understand tokens, a huge amount of the AI landscape that might currently seem confusing will suddenly make perfect sense.

This article will walk you through what tokens are, how they work, what context windows mean, and how pricing is structured across different models. By the end, you will have the foundational knowledge you need to make smart, cost-effective decisions about which AI tools to use and how to use them.

What Are Tokens?

When you type a message into an AI tool, you see words and sentences. But the AI model does not see what you see. Before it can process your text, the system breaks your input down into smaller units called tokens. You can think of tokens as the atoms of language for AI systems. They are the fundamental building blocks that large language models use to read, interpret, and generate text.

A token is not always a complete word, and this is where the concept becomes interesting. A token could be an entire common word. Words like “the,” “and,” “you,” and “apple” are typically processed as a single token because they appear so frequently in the training data that the tokenizer has learned to treat them as whole units. A token could also be a punctuation mark, like a period, a comma, or an exclamation point. Spaces count too, although in most tokenizers the space is bundled with the word that follows it rather than standing alone. And for longer or less common words, a single word might be split into multiple tokens. For instance, a word like “unbelievable” may not get processed as one unit. The tokenizer might break it into smaller fragments, something like “un,” “believ,” and “able,” resulting in three tokens for a single word. The exact split depends on the tokenizer each model uses.

Why does this matter? Because tokens are what AI models actually “see.” The model does not read your sentence the way you read a sentence, word by word from left to right. It receives a sequence of tokens, processes the relationships between them, and generates its response as another sequence of tokens. Everything that happens inside a large language model, from understanding your question to producing an answer, operates at the token level.

How Text Becomes Tokens: The Process of Tokenization

The process of converting your text into tokens is called tokenization, and it happens automatically every time you interact with an AI model. You never have to do it yourself, but understanding how it works will help you think more clearly about how AI processes your input.

Let us walk through a simple example. Take the phrase “Hello world!” and consider how a model might tokenize it. The word “Hello” becomes one token. The space followed by “world” becomes another token. And the exclamation mark “!” becomes a third token. So a two-word phrase with a punctuation mark results in three tokens. Those are the basic mechanics.
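To make that split concrete, here is a toy sketch in Python. It is only an illustration: real tokenizers rely on a learned vocabulary rather than a hand-written pattern, and the function name `toy_tokenize` and its regular expression are assumptions chosen purely to reproduce the three-piece split described above.

```python
import re

def toy_tokenize(text: str) -> list[str]:
    """Split text into words (keeping the space in front) and punctuation.

    Illustration only: a real tokenizer uses a learned vocabulary,
    not a hand-written pattern like this one.
    """
    # " ?\w+"   matches a word, optionally with the space in front of it
    # "[^\w\s]" matches a single punctuation character
    return re.findall(r" ?\w+|[^\w\s]", text)

tokens = toy_tokenize("Hello world!")
print(tokens)       # ['Hello', ' world', '!']
print(len(tokens))  # 3
```

Notice that the space travels with “world” instead of becoming a token of its own.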

Behind the scenes, most modern language models use a technique called Byte-Pair Encoding, or BPE, to determine how text gets split into tokens. You do not need to understand the mathematical details of BPE to use AI effectively, but the core idea is straightforward. During the model’s training process, the system analyzes enormous quantities of text and identifies which character combinations appear most frequently. The most common combinations get assigned their own tokens. Less common combinations get broken into smaller pieces.
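The core idea can be sketched in a few lines of Python. This is a heavily simplified version under stated assumptions: it works on a tiny list of words instead of a massive corpus, it operates on characters rather than bytes, and the function names (`learn_bpe_merges`, `merge_pair`, `encode`) are made up for this illustration. Production tokenizers are far more elaborate.

```python
from collections import Counter

def merge_pair(symbols: tuple[str, ...], pair: tuple[str, str]) -> tuple[str, ...]:
    """Fuse every adjacent occurrence of `pair` into a single symbol."""
    merged, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            merged.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            merged.append(symbols[i])
            i += 1
    return tuple(merged)

def learn_bpe_merges(words: list[str], num_merges: int) -> list[tuple[str, str]]:
    """Learn merge rules by repeatedly fusing the most frequent adjacent pair."""
    # Start with every word split into single characters.
    corpus = Counter(tuple(word) for word in words)
    merges = []
    for _ in range(num_merges):
        # Count how often each adjacent pair of symbols occurs.
        pair_counts = Counter()
        for symbols, freq in corpus.items():
            for pair in zip(symbols, symbols[1:]):
                pair_counts[pair] += freq
        if not pair_counts:
            break
        best = max(pair_counts, key=pair_counts.get)
        merges.append(best)
        # Rewrite the corpus with the winning pair fused together.
        new_corpus = Counter()
        for symbols, freq in corpus.items():
            new_corpus[merge_pair(symbols, best)] += freq
        corpus = new_corpus
    return merges

def encode(word: str, merges: list[tuple[str, str]]) -> list[str]:
    """Tokenize a word by applying the learned merges in order."""
    symbols = tuple(word)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)

merges = learn_bpe_merges(["the", "the", "the", "then", "them"], num_merges=2)
print(merges)                  # [('t', 'h'), ('th', 'e')]
print(encode("the", merges))   # ['the']
print(encode("thaw", merges))  # ['th', 'a', 'w']
```

After only two merges, the frequent word “the” has become a single token, while the unseen word “thaw” is still split into pieces.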

This is why everyday words like “the” and “is” each count as a single token, while an unusual word like “unbelievable” may get broken into several pieces. And if you were to type a string of random characters, something like “asdfghjkl,” the tokenizer would find few familiar patterns. It would fall back to much smaller fragments, often pairs of letters and in the worst case single characters, so one short gibberish string can cost several tokens. This flexibility is what allows AI models to handle any text you throw at them, whether it is standard English, technical jargon, another language entirely, or even nonsense.

Getting a Feel for Token Sizes

Since tokens do not map perfectly to words, it helps to have some rough mental benchmarks so you can estimate token counts without needing a calculator. These are approximations for standard English text, and they will serve you well in everyday usage.

About four characters of text equal roughly one token. One token covers about 0.75 words, which means a typical word costs a little more than one token (about 1.3), and longer or rarer words cost more. Seventy-five words of English text come out to approximately one hundred tokens. Thirty tokens will cover roughly one to two sentences. And one hundred tokens is approximately one paragraph of text.
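These rules of thumb are easy to turn into a quick estimator. The sketch below assumes standard English text and uses only the two ratios above; the function names are hypothetical, and the result is a ballpark figure rather than what any particular model's tokenizer would report.

```python
CHARS_PER_TOKEN = 4      # rule of thumb: about four characters per token
WORDS_PER_TOKEN = 0.75   # rule of thumb: one token covers about 0.75 words

def estimate_tokens_from_chars(text: str) -> int:
    """Rough token estimate based on character count."""
    return round(len(text) / CHARS_PER_TOKEN)

def estimate_tokens_from_words(text: str) -> int:
    """Rough token estimate based on word count."""
    return round(len(text.split()) / WORDS_PER_TOKEN)

sample = "word " * 75  # 75 short words
print(estimate_tokens_from_words(sample))  # 100
print(estimate_tokens_from_chars(sample))  # 94
```

The two estimates disagree slightly, which is expected. They are approximations, and either one is good enough for budgeting.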

To put this into perspective, consider a ten-minute video transcript. A person speaking at a normal pace of around 150 words per minute produces about 1,500 words in ten minutes, which works out to roughly two thousand tokens of text. That might sound like a lot, but compared to the capacity of modern AI models, it is a tiny fraction. When you hear that a model has a context window of two hundred thousand tokens, that means it could process the equivalent of roughly a hundred such transcripts at once. A model with a one-million-token context window could handle the equivalent of several medium-length books at once.

The takeaway here is that tokens are smaller than most people assume, and the capacity of modern models is far larger than most people realize. This is good news, because it means you have enormous room to work with when designing your AI-powered workflows.

Context Windows: The AI’s Working Memory

Now that you understand what tokens are and how they are sized, the next critical concept is the context window. If tokens are the atoms of AI language, the context window is the container that holds them. It represents the maximum number of tokens that an AI model can process in a single interaction, and it functions as the model’s working memory.

Here is what gets counted within that context window. First, your prompt, meaning the question or instruction you type in. Second, any conversation history from your current session, because every previous message in a back-and-forth conversation still occupies space in the window. Third, any system instructions that have been configured behind the scenes, such as guidelines that tell the model how to behave or what persona to adopt. And fourth, the AI’s response itself, because the output the model generates also consumes tokens from the same window.
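A small budgeting sketch shows how those four items add up. The function name and the example numbers are assumptions for illustration; in practice you would plug in the window size of the model you are using and token counts from its tokenizer.

```python
def tokens_remaining(
    context_window: int,
    system_tokens: int,
    history_tokens: int,
    prompt_tokens: int,
    max_output_tokens: int,
) -> int:
    """Return how many tokens are left once every component is counted.

    A negative result means the request would not fit in the window.
    """
    used = system_tokens + history_tokens + prompt_tokens + max_output_tokens
    return context_window - used

left = tokens_remaining(
    context_window=200_000,   # hypothetical model limit
    system_tokens=1_500,      # behind-the-scenes instructions
    history_tokens=40_000,    # earlier messages in this session
    prompt_tokens=2_000,      # the new question
    max_output_tokens=4_000,  # room reserved for the reply
)
print(left)  # 152500
```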

This is an important detail that many people overlook. The context window is not just about how much you can send to the model. It is the total capacity for everything: your input, the model’s output, and all the conversation history in between. If you are in a long back-and-forth conversation, every previous exchange is eating into that same window. Eventually, you may hit the limit, at which point the model either cannot accept new input or begins to “forget” the earliest parts of the conversation.
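One simple way this “forgetting” can happen is that the oldest messages are dropped until the rest fits. The sketch below illustrates that strategy only; it is an assumption about one possible approach, not a description of how any specific product manages its history. The name `trim_history` and the token counts in the example are hypothetical.

```python
def trim_history(history: list[tuple[str, int]], budget: int) -> list[tuple[str, int]]:
    """Keep the most recent messages whose combined token count fits the budget.

    Each history entry is a (text, token_count) pair, oldest first.
    """
    kept = []
    total = 0
    # Walk from the newest message back toward the oldest.
    for text, tokens in reversed(history):
        if total + tokens > budget:
            break  # this message and everything older is dropped
        kept.append((text, tokens))
        total += tokens
    kept.reverse()  # restore oldest-first order
    return kept

history = [
    ("first question", 50),
    ("first answer", 300),
    ("second question", 40),
    ("second answer", 250),
]
print(trim_history(history, budget=600))
# [('first answer', 300), ('second question', 40), ('second answer', 250)]
```

The first question no longer fits, so from the model's point of view it was never asked.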

This is exactly why you may have encountered a message in tools like ChatGPT or Claude telling you that your input is too long. It is not a vague error. It means you have exceeded the model’s context window. The total of your prompt, conversation history, system instructions, and expected response length has surpassed the maximum token count the model can handle at once. When this happens, the solution is usually to break your input into smaller pieces or start a fresh conversation.
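Breaking a long input into smaller pieces can be done mechanically. The following is a minimal sketch that splits on paragraph breaks and relies on the rough four-characters-per-token estimate; both choices are assumptions, and `split_into_chunks` is a hypothetical helper rather than part of any library.

```python
def split_into_chunks(text: str, max_tokens: int, chars_per_token: int = 4) -> list[str]:
    """Group paragraphs into chunks that stay under an estimated token limit.

    A single paragraph longer than the limit is kept whole, so the limit
    is a target rather than a guarantee.
    """
    max_chars = max_tokens * chars_per_token
    chunks = []
    current = ""
    for paragraph in text.split("\n\n"):
        candidate = paragraph if not current else current + "\n\n" + paragraph
        if len(candidate) <= max_chars:
            current = candidate  # the paragraph still fits in this chunk
        else:
            if current:
                chunks.append(current)
            current = paragraph  # start a new chunk
    if current:
        chunks.append(current)
    return chunks

document = "\n\n".join(["a" * 100] * 5)  # five 100-character paragraphs
chunks = split_into_chunks(document, max_tokens=60)
print(len(chunks))                # 3
print([len(c) for c in chunks])   # [202, 202, 100]
```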

Not All Models Are Created Equal

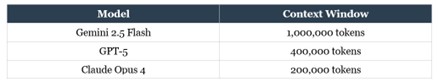

Different AI models come with different context window sizes, and those differences matter significantly depending on your use case. To give you a sense of the current landscape, here is how some of the major models compare. Note: These figures are a snapshot meant to illustrate the range. Newer models and larger context windows will likely have been released since this article was written.

As you can see, the range is substantial. A model with a one-million-token context window can hold vastly more information in a single session than one with two hundred thousand tokens. That does not automatically make the larger model “better” for every task, but it does mean it can handle more complex inputs, longer documents, and deeper conversation histories without running out of room.

Generally speaking, a larger context window tends to come with a higher price tag, though this is not always the case. The relationship between window size, model capability, and cost is something you will need to evaluate based on your specific needs. If you are processing short customer inquiries, you may not need a massive context window, and a smaller, cheaper model will serve you perfectly well. If you are analyzing lengthy legal contracts or feeding the model extensive research documents, you will want a model with the capacity to hold all that information at once.

The key factors to weigh when selecting a model include: the size of the context window relative to your typical input, the speed at which the model generates responses, the cost per token, and the model’s strengths in specific areas like coding, creative writing, or analytical reasoning. No single model is the best choice for every situation, which is why understanding these trade-offs is so valuable.
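As a rough sketch, weighing these factors can be expressed as a filter followed by a cost comparison. Everything in the catalog below is hypothetical: the model names, window sizes, prices, and strength labels are placeholders, and ranking by the sum of input and output price is a deliberately crude stand-in for a real cost estimate. Speed is left out to keep the example short.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class ModelOption:
    name: str
    context_window: int      # maximum tokens per interaction
    input_price: float       # USD per million input tokens (hypothetical)
    output_price: float      # USD per million output tokens (hypothetical)
    strengths: frozenset     # task labels the model is assumed to be good at

CATALOG = [
    ModelOption("small-fast", 128_000, 0.50, 2.00, frozenset({"summaries", "classification"})),
    ModelOption("mid-general", 400_000, 2.00, 10.00, frozenset({"summaries", "coding"})),
    ModelOption("large-premium", 200_000, 15.00, 75.00, frozenset({"reasoning", "coding"})),
]

def cheapest_model_that_fits(
    catalog: list[ModelOption],
    needed_tokens: int,
    required_strength: Optional[str] = None,
) -> Optional[ModelOption]:
    """Pick the cheapest model whose window and strengths cover the task."""
    candidates = [
        m for m in catalog
        if m.context_window >= needed_tokens
        and (required_strength is None or required_strength in m.strengths)
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda m: m.input_price + m.output_price)

print(cheapest_model_that_fits(CATALOG, 5_000).name)                # small-fast
print(cheapest_model_that_fits(CATALOG, 150_000).name)              # mid-general
print(cheapest_model_that_fits(CATALOG, 50_000, "reasoning").name)  # large-premium
print(cheapest_model_that_fits(CATALOG, 500_000))                   # None
```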

How AI Pricing Works: Input Tokens vs. Output Tokens

Now let us talk about money, because every token your AI model processes costs something. Understanding the pricing structure will help you budget effectively, avoid unnecessary expenses, and choose the right model for each task.

AI pricing is split into two categories: input tokens and output tokens. Input tokens are everything you send to the model. This includes your prompt, any documents or context you attach, your conversation history, and your system instructions. Output tokens are everything the model generates in response. The text it writes, the answers it provides, the summaries it creates, all of that is output.

Here is the important part: output tokens are significantly more expensive than input tokens. Across most models, you can expect output tokens to cost roughly three to five times more than input tokens, and for some models the gap is wider still. The reason is straightforward. A model can read all of your input tokens in a single parallel pass, but it has to generate its output one token at a time, with each new token depending on everything that came before it. At every one of those steps, the model has to calculate probabilities, select the next token, and keep the language coherent and contextually appropriate. That process is more resource-intensive than intake.

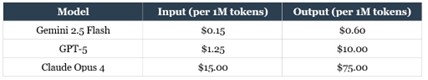

Pricing is typically displayed per million tokens, because individual tokens are so small and inexpensive that quoting per-token prices would involve inconveniently tiny numbers. Here is a comparison of how some major models are priced to give you a sense of the range.
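Because prices are quoted per million tokens, the cost of a single request is a short calculation. The sketch below uses placeholder prices of $3 and $15 per million tokens, which are assumptions for the arithmetic and not the rates of any real model.

```python
def request_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Return the cost in USD of one request, given per-million-token prices."""
    total = (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    )
    return total / 1_000_000

# Hypothetical prices: $3 per million input tokens, $15 per million output tokens.
cost = request_cost(2_000, 500, 3.00, 15.00)
print(f"${cost:.4f}")  # $0.0135
```

In this example the 500 output tokens cost more than the 2,000 input tokens ($0.0075 versus $0.0060), even though there are four times fewer of them.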

The differences here are dramatic. Gemini 2.5 Flash costs pennies per million tokens, making it extremely affordable for high-volume, less complex tasks. GPT-5 occupies a middle tier, offering strong general-purpose performance at a moderate price. Claude Opus 4, at the premium end of the spectrum, delivers top-tier reasoning and analytical capabilities, but at a cost that demands thoughtful usage.

What this means in practice is that you do not need to use the most expensive model for every task. If you are generating quick summaries, drafting routine emails, or handling simple classification tasks, a lightweight and affordable model will do the job beautifully and save you a significant amount of money. Reserve the premium models for tasks that genuinely require their advanced reasoning, nuanced writing, or deep analytical capabilities.

This tiered approach to model selection is one of the most practical skills you can develop in the AI space. Matching the right model to the right task is not just about getting better results. It is about building systems that are sustainable and cost-effective over time.

Optimizing Your Token Usage

Once you understand that every token costs money and that context windows have limits, the natural next question is: how do you get the most value out of every token you spend? Some people informally call this tokenomics, borrowing a term from the cryptocurrency world to describe the art and science of being efficient and economical with your AI usage.

The first principle is to be concise with your prompts. This does not mean being vague or leaving out important details. It means eliminating unnecessary filler, redundant instructions, and excessive preamble. A well-crafted prompt that is clear and direct will get you a better response and cost you fewer tokens than a rambling, disorganized one. Every extra word in your prompt is a token you are paying for, so make each one count.

The second principle is to manage your output length. If you only need a two-sentence summary, tell the model that explicitly. Left to its own devices, an AI model will often produce longer responses than necessary because it is designed to be thorough. By specifying the desired length or format of the output, you can dramatically reduce your output token consumption, which, as you now know, is the more expensive side of the equation.

The third principle is to choose the right model for each task. As we covered in the pricing section, different models have vastly different cost structures. Running every task through a premium model is like hiring a brain surgeon to put on a bandage. It gets the job done, but the cost is absurdly disproportionate to the task. Develop the habit of asking yourself: What is the simplest, most affordable model that can handle this particular job well?

The fourth principle is to be mindful of conversation history. In a long back-and-forth session, every previous message continues to occupy space in the context window and is reprocessed with every new exchange. If you are in a conversation that has drifted far from your original topic, it may be more efficient to start a fresh session with a clean, focused prompt rather than carrying the weight of a long and increasingly irrelevant history.
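A quick calculation shows how fast that reprocessing adds up. The sketch assumes every user message and every reply is the same size, that the full history is resent on each turn, and that no caching discount applies. These are simplifications, and the function name is hypothetical.

```python
def cumulative_input_tokens(turns: int, tokens_per_message: int) -> int:
    """Total input tokens processed across a conversation.

    Assumes each turn resends the whole history plus one new user message,
    and that every message and reply has the same token count.
    """
    total = 0
    history = 0
    for _ in range(turns):
        # This request carries the entire history plus the new message.
        total += history + tokens_per_message
        # Afterwards the history holds the new message and the reply.
        history += 2 * tokens_per_message
    return total

print(cumulative_input_tokens(turns=10, tokens_per_message=200))  # 20000
print(cumulative_input_tokens(turns=20, tokens_per_message=200))  # 80000
print(10 * 200)  # 2000 input tokens if each question started a fresh session
```

Under these assumptions, doubling the length of the conversation quadruples the input tokens processed, which is why a fresh session can be noticeably cheaper.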

These principles might seem minor in isolation, but they compound dramatically when you are running AI-powered systems at scale. A business processing thousands of customer inquiries per day, for example, can save substantial sums by optimizing prompt length, specifying output constraints, and routing different types of inquiries to appropriately priced models.

Bringing It All Together

Let us step back and connect the dots on everything you have learned in this article, because these concepts form the foundation you will rely on every time you work with AI going forward.

Tokens are the fundamental units that AI models use to process language. They are not words, not syllables, and not characters. They are statistically derived fragments that the tokenizer has learned to recognize from its training data. Common words get their own tokens. Less common words get broken into pieces. Unfamiliar text gets split into very small fragments, sometimes down to individual characters. This flexible system allows AI to handle virtually any text input.

Context windows define the total working memory available to a model in any single interaction. That memory must accommodate your input, the conversation history, system instructions, and the model’s output, all at once. Different models offer different window sizes, and that directly affects what kinds of tasks they can handle.

Pricing is structured around input and output tokens, with output costing significantly more than input. The range across models is enormous, from well under a dollar to tens of dollars per million tokens, and selecting the right model for each task is one of the most impactful decisions you will make as you build AI-powered workflows.

And optimization, being deliberate about how you craft your prompts, how you manage conversation history, how you constrain output length, and how you match models to tasks, is what separates someone who uses AI casually from someone who uses it strategically.

You do not need to memorize pricing tables or token counts. What you need is the mental framework: understand that tokens have a cost, that context has limits, and that efficiency matters. With that framework in place, you are equipped to make informed decisions every time you interact with an AI model, whether you are typing a quick question into a chatbot or designing a complex automated system that processes thousands of requests per day.